TL;DR

A RAG-based AI copilot for customer support at Thailand's largest bank, helping 150+ agents cut ticket resolution time from 42 minutes to under 5. It retrieves customer info in real time, cross-checks internal bank policies, drafts suggested replies for agents to review, and auto-generates a ticket summary at the end of every conversation.

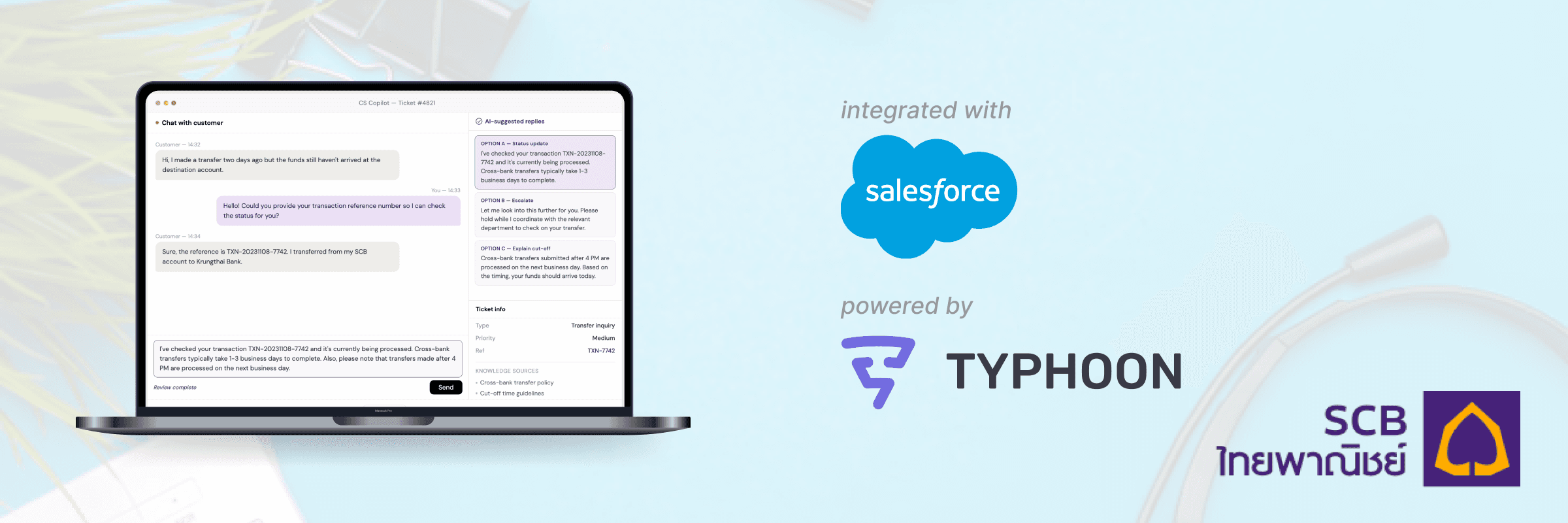

copilot chat interface

auto-generated ticket summary

Due to enterprise confidentiality, actual product screens can't be shared. These interactive mockups were built to demonstrate the experience. Conversations are translated into English.

The Problem

SCB 10X is the innovation arm of Siam Commercial Bank, Thailand's largest financial institution. Their customer support team handled thousands of inquiries daily, and most of the process was still manual.

- Slow resolution with scattered knowledge: A single ticket averaged 42 minutes. Policies lived in PDFs, wikis, and shared drives with inconsistent formats.

- No automatic conversation capture: Agents wrote up tickets by hand after each chat, so details were sometimes lost, inaccurate, or incomplete.

- Strict regulation and data privacy: Financial services are heavily regulated. Every design choice had to account for compliance and Thailand's Personal Data Protection Act (PDPA).

Context

This was late 2023, about a year after ChatGPT launched. RAG was still new, with no established best practices or playbooks for building it in production.

The Solution

A RAG-based copilot that retrieves bank knowledge in real time, drafts suggested replies for agents to review, and auto-generates ticket summaries, so agents spend less time hunting for answers and more time talking to customers. We built it as a copilot, not a chatbot, because financial services regulation requires a human in the loop at every step.

Real-time knowledge retrieval

Semantic search across the bank's Salesforce knowledge base (policies, troubleshooting guides, regulatory docs) surfaces relevant passages in context as the agent chats. Sources populate in the bottom-right of the screen so agents see exactly where each answer came from.
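To make the retrieval step concrete, here is a minimal sketch of semantic search as cosine similarity over embeddings. It is an illustration only: the `Doc` type, the `top_k` function, the source names, and the toy 3-dimensional vectors are all hypothetical, standing in for the production embedding model and Pinecone index, which are not shown here.

```python
import math
from dataclasses import dataclass


@dataclass
class Doc:
    source: str          # hypothetical source label, the kind shown to the agent
    text: str
    vector: list[float]  # embedding; toy 3-d values for illustration


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], docs: list[Doc], k: int = 2) -> list[tuple[float, Doc]]:
    """Return the k most similar docs, best first, with their scores."""
    scored = [(cosine(query_vec, d.vector), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


docs = [
    Doc("password-reset-guide", "Steps to reset a mobile banking password.", [0.9, 0.1, 0.0]),
    Doc("cross-bank-transfer-policy", "Limits and fees for cross-bank transfers.", [0.1, 0.9, 0.1]),
    Doc("card-dispute-policy", "How to file a card dispute.", [0.0, 0.2, 0.9]),
]

# Pretend this vector is the embedding of "I forgot my app password".
results = top_k([0.8, 0.2, 0.0], docs, k=2)
for score, doc in results:
    print(f"{score:.2f}  {doc.source}")
```

Returning the source label alongside each score is what lets the interface show agents where an answer came from.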

Reply suggestions

The copilot drafts three reply options per message, each grounded in retrieved sources. Agents pick one, edit, and send. Human in the loop at every step, as required in regulated financial services.
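One way to picture "grounded in retrieved sources" is as prompt assembly: the retrieved passages are numbered and placed in the prompt, and the model is asked for several drafts that cite them. The sketch below is an assumption about how that could look, not the production prompt; `build_reply_prompt`, the source fields, and the wording are hypothetical, and no model is called.

```python
def build_reply_prompt(customer_message: str, sources: list[dict], n_options: int = 3) -> str:
    """Assemble a prompt asking for n reply drafts grounded only in the given sources."""
    # Number each passage so the drafts can cite it as [1], [2], ...
    context = "\n".join(
        f"[{i}] ({s['title']}) {s['text']}" for i, s in enumerate(sources, start=1)
    )
    return (
        "You are assisting a bank support agent. Use only the sources below.\n"
        f"Sources:\n{context}\n\n"
        f"Customer message: {customer_message}\n\n"
        f"Draft {n_options} alternative replies. Cite source numbers like [1] "
        "after each claim. If the sources do not cover the question, say so."
    )


# Hypothetical retrieved passages.
sources = [
    {"title": "Password reset guide", "text": "Customers can reset their password in the app."},
    {"title": "Identity verification policy", "text": "Verify identity before any account change."},
]

prompt = build_reply_prompt("How do I reset my password?", sources)
print(prompt)
```

The drafts stay suggestions: the agent still picks one, edits it, and sends it.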

Auto-generated ticket summary

At the end of each conversation, the system writes a summary and next-step list back to Salesforce. No more forgotten or incomplete tickets.

Tech Stack

The RAG pipeline searched across the bank's entire knowledge base, everything from password reset guides to cross-bank transfer policies to Thai-specific regulatory edge cases.

- LangChain: Orchestration & prompt routing

- Pinecone: Vector DB for semantic search

- LangFuse: Observability & monitoring

- Salesforce: Knowledge base, CRM & output

PDPA-compliant PII redaction

Sensitive customer data was masked before anything hit the LLM, then re-injected into the final output. Compliant by design, with no raw PII ever leaving the bank's infrastructure.
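The mask-then-re-inject flow can be sketched in a few lines. This is a simplified illustration under stated assumptions: the two regex patterns, the placeholder format, and the `mask`/`unmask` helpers are hypothetical, and a real PII detector would need far broader coverage than two regular expressions.

```python
import re

# Illustrative patterns only (assumed): an email address and a 10-digit account number.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ACCOUNT": re.compile(r"\b\d{10}\b"),
}


def mask(text: str) -> tuple[str, dict[str, str]]:
    """Replace each PII match with a placeholder and remember the mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PII_PATTERNS.items():
        def _sub(match: re.Match, label: str = label) -> str:
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_sub, text)
    return text, mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text that contains placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


original = "Customer somchai@example.com asked about account 1234567890 today"
masked, mapping = mask(original)
print(masked)  # only this masked text would be sent to the model

# Stand-in for a model draft that echoes the placeholders back.
draft = "We sent a confirmation to <EMAIL_1> for account <ACCOUNT_2>."
final = unmask(draft, mapping)
print(final)
```

The mapping never leaves the caller, so the model only ever sees placeholders while the agent sees the real values in the final draft.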

Evaluation in a world with no playbook

There was no off-the-shelf way to evaluate RAG in late 2023, so we built our own: automated retrieval tests, LLM-based evaluation against a golden dataset put together with CS leads, LangFuse tracing for observability, and a structured feedback loop from the agents themselves.
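As an illustration of what an automated retrieval test against a golden dataset can look like, the sketch below computes a hit rate at k: the share of golden questions whose expected source appears in the top k results. The metric choice, the `hit_rate_at_k` function, the dataset fields, and the fake retriever are assumptions for the example, not the team's actual harness.

```python
from typing import Callable


def hit_rate_at_k(golden: list[dict], retrieve: Callable[[str], list[str]], k: int = 3) -> float:
    """Fraction of golden cases whose expected source is among the top-k retrieved sources."""
    hits = 0
    for case in golden:
        retrieved = retrieve(case["question"])[:k]
        if case["expected_source"] in retrieved:
            hits += 1
    return hits / len(golden)


# Hypothetical golden dataset: question plus the source a correct retrieval should return.
golden = [
    {"question": "How do I reset my password?", "expected_source": "password-reset-guide"},
    {"question": "What is the transfer limit?", "expected_source": "cross-bank-transfer-policy"},
    {"question": "How do I dispute a charge?", "expected_source": "card-dispute-policy"},
]

# Fake retriever with canned rankings; a real test would query the vector index.
canned = {
    "How do I reset my password?": ["password-reset-guide", "card-dispute-policy"],
    "What is the transfer limit?": ["cross-bank-transfer-policy", "password-reset-guide"],
    "How do I dispute a charge?": ["password-reset-guide", "cross-bank-transfer-policy"],
}

score = hit_rate_at_k(golden, lambda q: canned[q], k=2)
print(f"hit rate@2 = {score:.2f}")
```

A check like this can run on every change to chunking, embeddings, or prompts, so retrieval regressions surface before agents see them.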

The Impact

- 42 min → <5 min: average ticket resolution time

- 150+: agents using the copilot

- 87%: customer satisfaction score (CSAT)